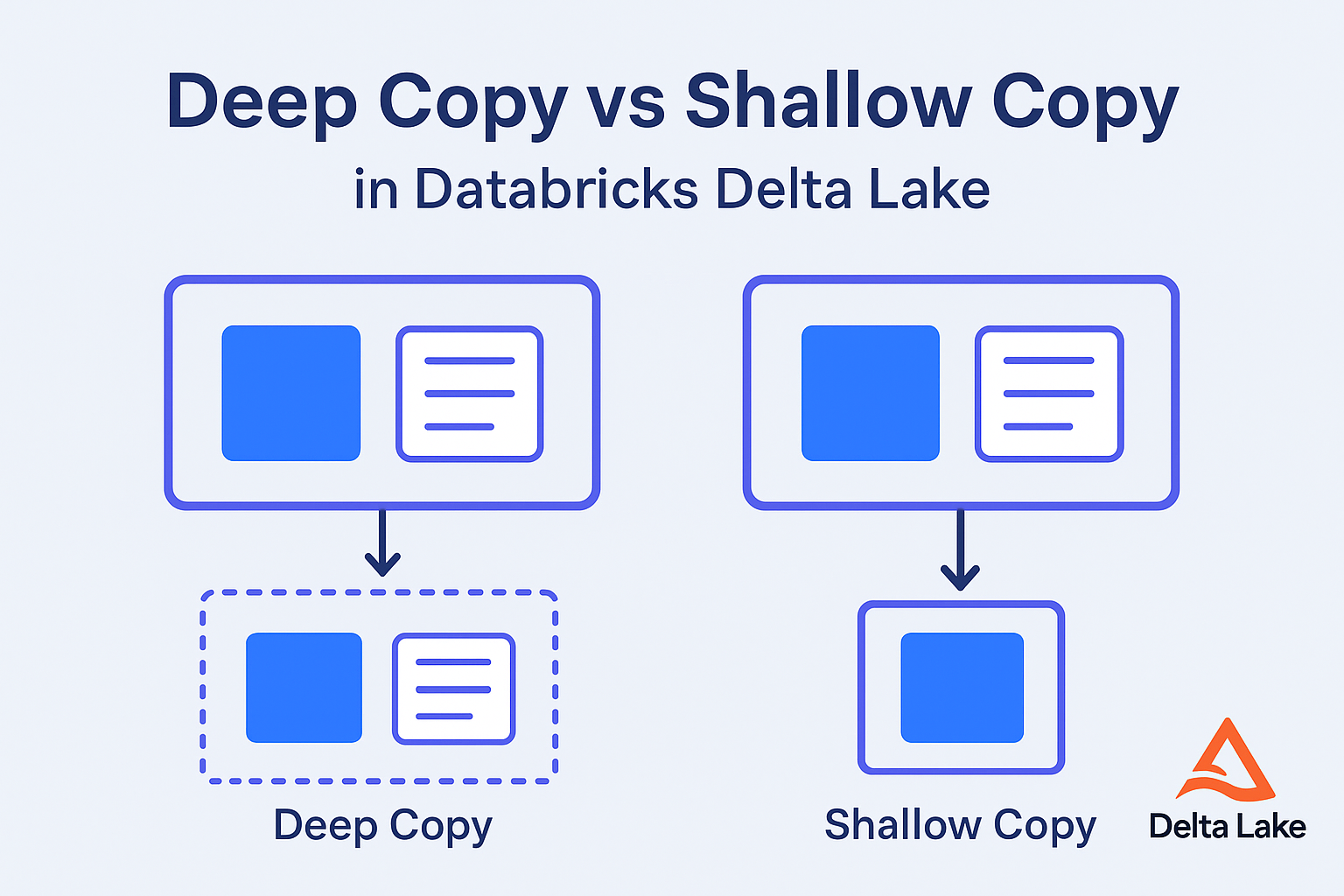

Deep Copy vs Shallow Copy in Databricks Delta Lake

When working with large-scale data in Databricks Delta Lake, it’s common to create copies of tables for testing, development, or archival purposes. However, not all

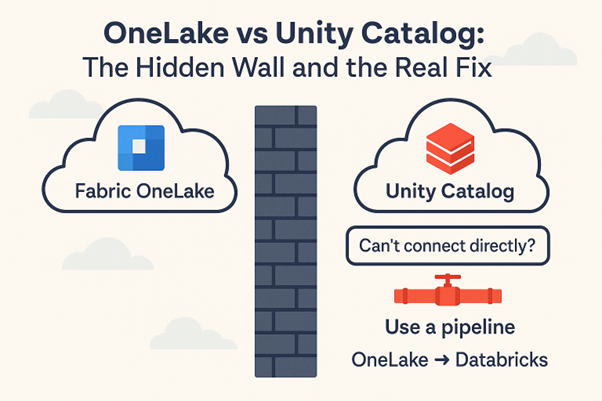

The Hidden Wall Between Fabric OneLake and Databricks Unity Catalog

These days, many teams use Microsoft Fabric OneLake for unified storage and Databricks Unity Catalog (UC) for data governance and analytics. But here’s the catch:

Databricks Clean Room — where shared insights meet uncompromised privacy

A data clean room is a secure space that enables businesses to work together on sensitive data without exposing or compromising it. By using robust

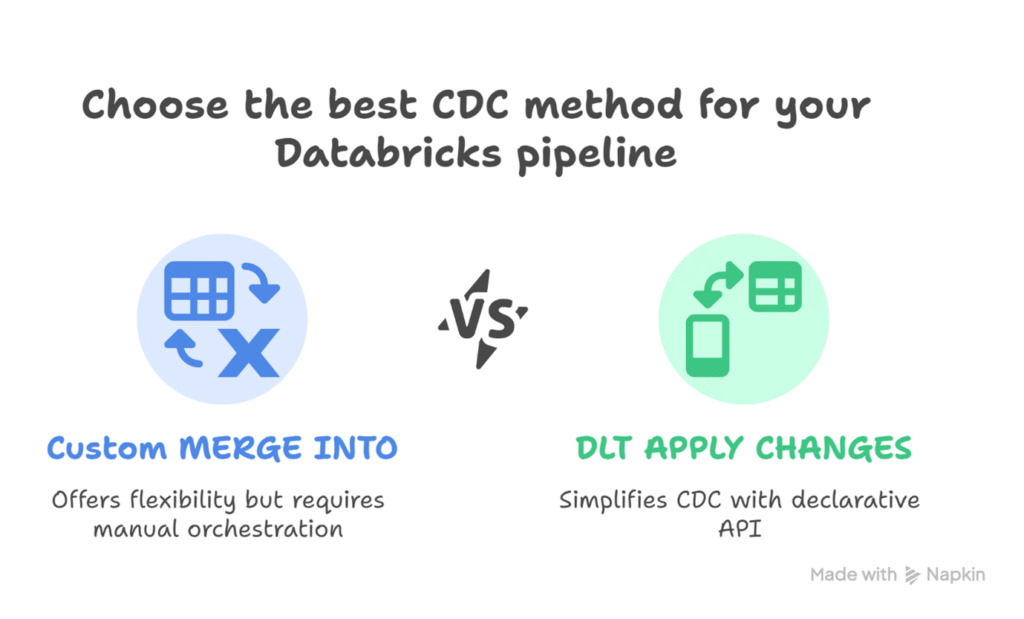

Handling CDC in Databricks: Custom MERGE vs. DLT APPLY CHANGES

Change data capture (CDC) is crucial for keeping data lakes synchronized with source systems. Databricks supports CDC through two main approaches: Custom MERGE operation (Spark

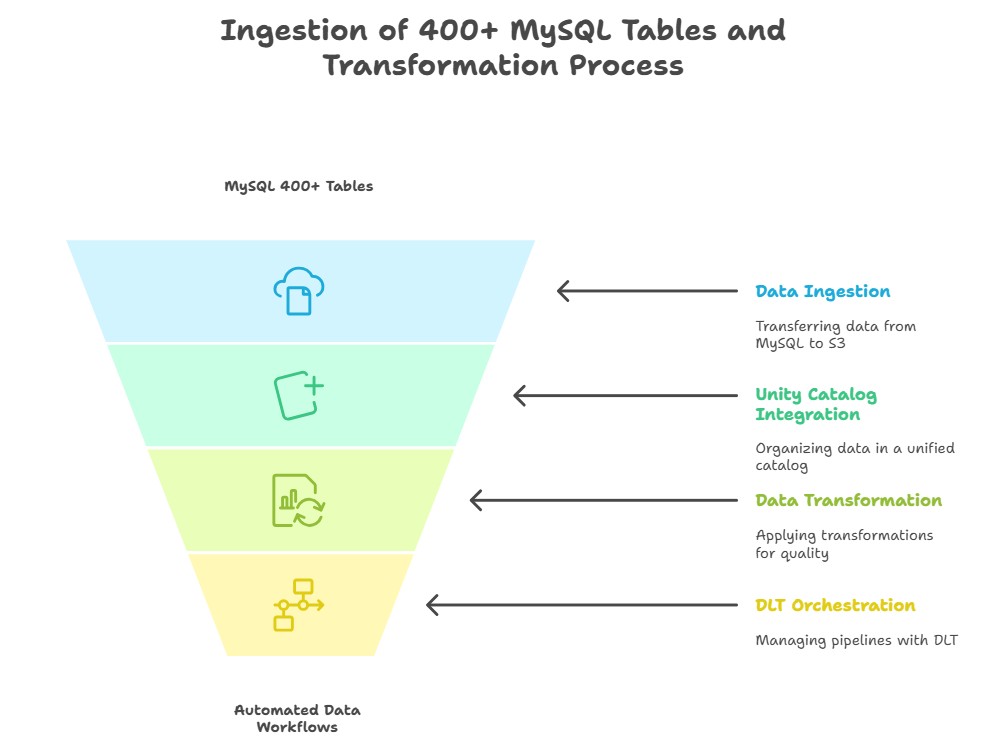

End-to-End Ingestion of 400+ MySQL Tables with Databricks Delta Live Tables

Ingesting and managing data from more than 400 MySQL tables on recurring schedules is a complex challenge. Traditional approaches often lead to pipelines that are

Streaming Made Simple with Databricks Debezium

Introduction: In today’s fast-paced, data-driven world, real-time data processing and change data capture (CDC) are crucial for businesses to make timely and informed decisions. Databricks,

Liquid Clustering in Databricks: The Future of Delta Table Optimization

Introduction: The Big Shift in Delta Optimization. In the ever-evolving world of big data, performance tuning is no longer optional; it’s essential. As

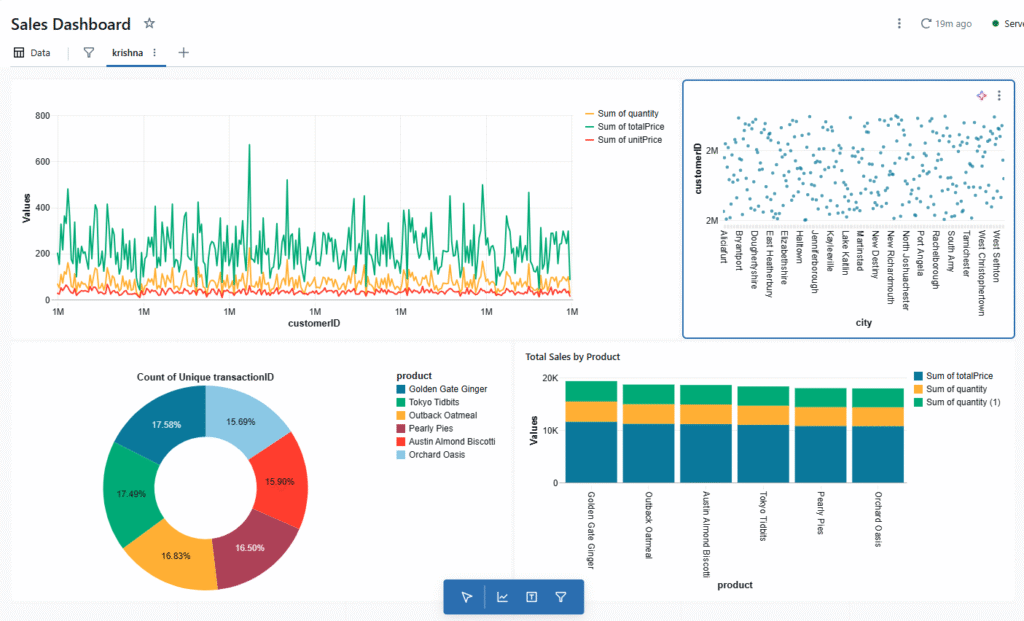

Turning Notebooks into Dashboards with Databricks

Why Databricks Notebook Dashboards Stand Out: In the world of data-driven decision-making, dashboards are essential for turning raw numbers into actionable insights. While most dashboards

Triggering Azure Data Factory (ADF) Pipelines from Databricks Notebooks

Overview: In modern data workflows, it’s common to combine the orchestration capabilities of Azure Data Factory (ADF) with the powerful data processing of Databricks. This

Delta Sharing: Let’s Share Seamlessly

Data became valuable the moment we started generating it at scale. As organizations began storing it by region — each with its own compliance rules, protocols, and