Building Your First Workflow in Databricks: A Step-by-Step Guide to Parameters, Triggers, and More

Have you ever wanted your data pipelines to run like clockwork, without manual intervention, and with full control over when and how they run?

Databricks AI/BI Dashboards: Transforming Data into Intelligent Insights

In a world driven by data, organizations require more than static reports — they need real-time, intelligent visual analytics that guide decisions dynamically. Databricks AI/BI

Automated Schema Drift Detection in Databricks: A Scalable and Configurable Approach

In modern data engineering, complex pipelines constantly process vast amounts of information from diverse sources. But one silent disruptor can destabilize everything—schema drift. When source

Databricks Pipeline Showdown: Delta Live Tables vs Jobs & Workflows

Organizations need data pipelines that are not only scalable and reliable but also simple to manage and evolve as business needs grow. Within the Databricks

Breaking Data Silos with Federated Data Sharing: The Future of Connected Analytics

In today’s fast-moving world, every sector—from finance and manufacturing to pharma—depends on data for decision-making and innovation. Yet much of that data remains locked in

Databricks to Azure SQL DB: Secure Authentication with Service Principals

In enterprise data platforms, Spark is the backbone for large-scale data processing, whether in Azure Databricks, Synapse, or standalone clusters. A common requirement is to establish a connection

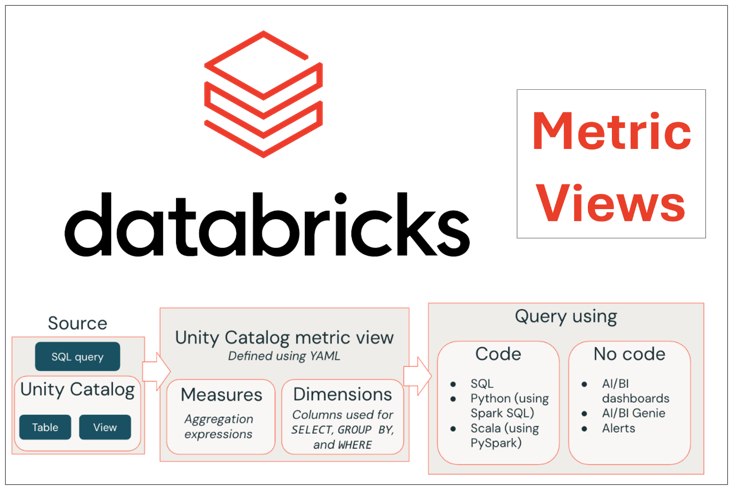

A Deep Dive into Metric Views for Beginners in Databricks Unity Catalog

Metric Views in Databricks Unity Catalog are a powerful way to create consistent, reusable business metrics, making it easy for teams to analyze key performance

Verify, Trust, Comply: The Future of Responsible AI on Databricks

Regulators expect timely, accurate disclosures; investors demand transparent ESG performance; customers reward brands that do the right thing and prove it. Yet inside most enterprises,

Talk Data to Me: Conversational AI Meets the Data Intelligence with Databricks

In today’s data-driven world, businesses sit on mountains of data, but turning raw data into actionable insights remains a major challenge. Multiple siloed systems, fragmented

Seamless Ingestion from Google Sheets to Databricks: A Step-by-Step Guide

In today’s data-driven world, enterprises handle massive amounts of continuously arriving data from various sources. Google Sheets often serves as a quick and easy way